Base container

Configurable Default Container

VNS3 Base overview

The VNS3_Base plugin uses a small-footprint Ubuntu 20.04 LTS image as its operating system. Customers are welcome to provide their own containers based on other Linux distributions compatible with the kernel used in their VNS3 edition.

VNS3_Base includes Ubuntu's unattended-upgrades package, which can be configured to automatically install security patches from the public repositories.

VNS3_Base is deployed to VNS3 using the “Containers” mechanism. These instructions cover customisation of the container image so that customer access keys can be installed and other software installations can be performed.

Please be familiar with the VNS3 Container configuration guide which can be found on the Cohesive Networks Product Resources page.

Getting the Default VNS3_Base Container

The default Linux (Ubuntu 20.04) container, released February 16th, 2023, is available at the following URL: https://vns3-containers-read-all.s3.amazonaws.com/VNS3_Base/vns3_base_2004_20230216.tar.gz

This is a read-only Amazon S3 storage location. Only Cohesive Networks can update or modify files stored in this location.

This URL can be used directly in a VNS3 Controller, via the Web UI or API, to import the container into that controller. (A general screenshot walkthrough and help are available in the Container System Overview document.)

(For older base OS needs, the following images remain available but are not being actively updated or maintained.)

https://vns3-containers-read-all.s3.amazonaws.com/VNS3_Base/VNS3_BASE_1804.tar.gz

https://vns3-containers-read-all.s3.amazonaws.com/VNS3_Base/VNS3_BASE_1404.tar.gz

https://vns3-containers-read-all.s3.amazonaws.com/VNS3_Base/VNS3_BASE_1204.tar.gz

Getting the VNS3 Base Container

From the Container > Images menu item, choose Upload Image.

To use the pre-configured VNS3 Base, paste the URL from the previous section into the Image File URL box.

When the Image has imported it will say Ready in the Status Column.

To then launch a running VNS3_Base container, choose Allocate from the Action menu.

Launching a VNS3 Base Container

After selecting Allocate from the Actions menu you then name your container, provide a description and the command used to execute the container.

The name and description should be something meaningful within the context of your organization and its policies.

In MOST cases the command used to run plugin containers will be: /usr/bin/supervisord

However, this may vary between containers; please consult each one’s specific documentation.

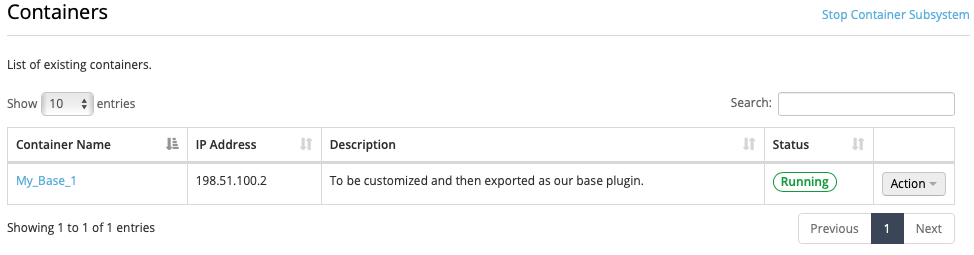

Confirming the VNS3 Base Container is Running

After executing the Allocate operation you will be taken to the Container Display page.

You should see your VNS3_Base Container with the name you specified. The Status should be Running and it should have been given an IP address on your internal container subnet (in this case 198.51.100.2).

Customizing VNS3 Base Plugin

Accessing the VNS3_Base Container

Accessing a Container from the public Internet or your internal subnets requires additions to the inbound hypervisor firewall rules (network firewall or security group) for the VNS3 Controller, as well as to the VNS3 Firewall.

The following example shows how to access an SSH server running as a Container listening on port 22.

Network Firewall/Security Group Rule

Allow port 22 from your source IP or subnets.

VNS3 Firewall

Enter rules to port forward incoming traffic to the Container Network and Masquerade outgoing traffic off the VNS3 Controller’s outer network interface.

#Let the Container Subnet Access the Internet Via the VNS3 Controller’s Outer or Public IP

MACRO_CUST -o eth0 -s <VNS3_Base Container Network IP> -j MASQUERADE

#Port forward port 44 to the Container port 22

PREROUTING_CUST -i eth0 -p tcp --dport 44 -j DNAT --to <VNS3_Base Container Network IP>:22
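The two rules above differ only in the container address and the ports, so they can be generated rather than typed by hand. The following is a minimal sketch, not part of the VNS3 tooling: the function name `ssh_forward_rules` and its parameters are hypothetical, and the address 198.51.100.2 is only a placeholder for your container's IP.

```python
import ipaddress


def ssh_forward_rules(container_ip, external_port=44, container_port=22, iface="eth0"):
    """Return the masquerade and port-forward rules shown above as strings.

    Hypothetical helper for illustration; it only formats text and does
    not talk to a VNS3 Controller.
    """
    ip = ipaddress.ip_address(container_ip)  # raises ValueError on a bad address
    for port in (external_port, container_port):
        if not 1 <= port <= 65535:
            raise ValueError(f"port out of range: {port}")
    return [
        f"MACRO_CUST -o {iface} -s {ip} -j MASQUERADE",
        f"PREROUTING_CUST -i {iface} -p tcp --dport {external_port} "
        f"-j DNAT --to {ip}:{container_port}",
    ]


if __name__ == "__main__":
    # Placeholder container address; substitute the IP shown on the
    # Container Display page.
    for rule in ssh_forward_rules("198.51.100.2"):
        print(rule)
```

The generated lines can then be pasted into the VNS3 Firewall page. Validating the address first avoids submitting a rule with a malformed IP.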

Securing the VNS3_Base container

By default the container has the following accounts, configured as described.

“root” - The root account is locked. The root account is not allowed to remote shell into the container. This is our recommended approach. However, if you wish to, you can use the “container_admin” account to unlock root, provide a root password, and edit /etc/ssh/sshd_config to allow remote login by root.

“container_admin” - The default password is “container_admin_123!”. The default demo public key is also installed in /home/container_admin/.ssh/authorized_keys. PLEASE change this password and this key when configuring, or create a new default plugin container image as your base for future use, following your authentication procedures. The account “container_admin” has “sudo” or superuser privileges, and is allowed to remote shell into the container.

By default the VNS3 Base container is configured for username/password login. You can customize the container for SSH key usage by allocating an instance of the container, modifying sshd_config to disallow password authentication, entering your public key into /home/container_admin/.ssh/authorized_keys, and saving a new custom base image for your use.
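As an illustration of the sshd_config change described above, here is a minimal sketch that rewrites the text of an sshd_config so that password authentication is turned off. `PasswordAuthentication` is a standard OpenSSH directive; the function name `disable_password_auth` is hypothetical, and the sketch assumes the directive is not set inside a `Match` block or an included file.

```python
import re

# Matches "PasswordAuthentication yes|no", whether active or commented out.
_DIRECTIVE = re.compile(
    r"^\s*#?\s*PasswordAuthentication\s+(yes|no)\s*$", re.IGNORECASE
)


def disable_password_auth(config_text):
    """Return config_text with a single 'PasswordAuthentication no' line.

    The first matching line is replaced in place and later duplicates
    are dropped. If no line matches, the directive is added at the top,
    because sshd uses the first value it finds for each keyword.
    """
    out = []
    replaced = False
    for line in config_text.splitlines():
        if _DIRECTIVE.match(line):
            if not replaced:
                out.append("PasswordAuthentication no")
                replaced = True
            continue
        out.append(line)
    if not replaced:
        out.insert(0, "PasswordAuthentication no")
    return "\n".join(out) + "\n"


if __name__ == "__main__":
    sample = "Port 22\n#PasswordAuthentication yes\n"
    print(disable_password_auth(sample), end="")
```

Before relying on such a change, confirm that key-based login works in a second session. Running `sshd -t` checks the edited file for syntax errors before the SSH service is restarted.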

Accessing via the default private key

Here is the default private key for initial login:

-----BEGIN RSA PRIVATE KEY-----

MIIEoAIBAAKCAQEA1pIQ/2VxIR6DJx4/mKKfZJ2EuhAe+jJaXnbYMq33Zryum5ku

/r7KKcgR97R7GV0McHo23BJP/SoQrbyvIwRVBurnH32Okxl/ymX0YeudOlLh2/R/

palDnPVOtuQnY836poGxp3/X2H86/MgrHOclbeGy8Ezm6+zwnl18VccqiGYMW06c

a2qLGVMIh6WD03/p++l+QEPRmhAzfqWZJ02GG12lCK7ECODRELR0Y+ppe+yg2DaF

QI8gywRDa6l9v7BTEc5l/k3j2xqJxNXaBVzgjCJmVc7dfgfR1io31IHiTw1M8YPf

5lNpMdfiV4DjcG9f6GcUuO6uXgMZucnQT3ldfwIBIwKCAQAGIW4zLsi3zav5zaoL

rN/7j3jSHbe+AXBL14KFGunPvD+AydzFcypY9xZ0yqRucF9w7YyJ8eUHO7dVa8p9

V+UsFVcPhz6WfRJHnINTQT8Bqpi9JD4pTfqeFaMpzAEgG9P2IPZyf/7aTMcryzRu

ikLl4eCKhdq2SJkpGJ0nBbDCEX3p8H9jDWKlPxZ4vEbeqZeDMV+PPhVjUtrElAMB

amJY3/WmGPRH90pOO47vnZ+rSd/GLDpEuGYvjU7F64cBZUQbf4rYTCGW3dCyuw5g

iChEeiOvbYEYRffEh0/fv3Bn31qFteeY7HXOSAGrRm/KuUxejkTTs3RZBOjFLmBj

UuCrAoGBAPbWMrEueimj0zQcfxBlKFaph0DQQTFEXg0evgv+RitXdChooB9SmOe2

sOYbY36DX6V6QTzNsHOEoLuqdShPi3a9JIDyOAXdIBMTqt2SvywRBPJQffFoCJ+/

AbrfVr6Seu45C5t+aYlS8nULbphqp8Cvyof4ldV+5KyGtbllaNlPAoGBAN6JOoCy

G+Td38HpaML9J9xioizahbPBXj1/qyP3e+idSubqpT7feMCn3wOF+haNc2NF6VEN

qLTGEcKyAOA/TIySOel5rUZdpu5BmAVAADMeapMJWEXEblI4qJFd/sWJCP5wmZp/

lcSrDTLhcQJOci5LKSPOz/Czcpo9vOlVu8zRAoGAd+Rhw8YeFDmhGU+rbl0E9uSg

x7WcAfyitepcTvfY8HrvRtO7fO2aubCBztoaYgVLtsZaM3nZXK4iL0QqRseM4ebX

N1ET5ZdKF+T7OGvZMqkuSc9THXusatkeGPAi0Zeay3rLH6PM3EzcKjjAsG5RetkK

mdCDSnDVeF6wCZen9IUCgYAMt2JtwQjogbUDxDHfQaqBnzx3l3VaupervicJXpld

v9hk93coKgbmb/4ddV6/dcwUTSNGdc8gRdUhEXxklecd+boqmT0Z9rkU7c4sL4r7

m1aMDymdljIwlYX5rZmHoW46bNWTzMa6x/IgKiO2/SsYlpSi9d//IDJvNrpWee15

awKBgAczjW0Ag+nosFzklHhDAWIEZ+qgvdMcXf8pTOzgo0wyOl4SYTccp82Ffxee

25d8DyolvGgRjfDXKMyw7zfzwiknsZozEGNFDW+sgsPR9Pe1SQx07PtnUUflb3/C

v5LiLZmgW+RFvQf7lGqQpQSpfPuY6H8vwjxlA89SP3UwTi4N

-----END RSA PRIVATE KEY-----

Primary files for customization

There are two significant files for securing the VNS3_Base container:

/etc/ssh/sshd_config

Please ensure this file is configured to your organization’s best practices.

/home/container_admin/.ssh/authorized_keys

The base container comes with an example public key installed; the matching private key is published in the VNS3 documentation. Please remove this key after initial use or programmatic configuration.

Putting it all together - Using the built-in TCP tools

TCP Utilities for Traffic Analysis

One of the more difficult parts of application deployment, connectivity, and security in cloud or virtual environments is that the virtual infrastructure is not well suited to giving customers direct access to the network flows to their devices.

The VNS3_Base container can be used to build other container plugins, and it also has the iftop and tcpdump utilities built in. Both utilities accept filter expressions in libpcap syntax, but display results in different ways.

To see traffic coming into your container in a graphical (curses-based) view, you could execute from a shell: iftop -n -N -i eth0 -f "not port 22"

To see individual packet information in a scrolling display, use: tcpdump -pni eth0 -f "not port 22"
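When more than one port needs to be excluded (for example, both the SSH port and a monitoring port), the filter expression can be assembled programmatically. The sketch below is only illustrative: the helper names are hypothetical, and the command-line flags are copied from the two examples above rather than taken from any VNS3 API.

```python
import shlex


def exclude_ports_filter(ports):
    """Build a libpcap filter such as 'not port 22 and not port 44'."""
    if not ports:
        raise ValueError("at least one port is required")
    for port in ports:
        if not 1 <= int(port) <= 65535:
            raise ValueError(f"port out of range: {port}")
    return " and ".join(f"not port {int(port)}" for port in ports)


def iftop_command(ports, iface="eth0"):
    """Argument list mirroring the iftop example above."""
    return ["iftop", "-n", "-N", "-i", iface, "-f", exclude_ports_filter(ports)]


def tcpdump_command(ports, iface="eth0"):
    """Argument list mirroring the tcpdump example above."""
    return ["tcpdump", "-pni", iface, "-f", exclude_ports_filter(ports)]


if __name__ == "__main__":
    # shlex.join quotes the filter so the line can be pasted into a shell.
    print(shlex.join(iftop_command([22])))
    print(shlex.join(tcpdump_command([22, 44])))
```

Building an argument list, instead of concatenating one long string, keeps the filter as a single argument even though it contains spaces.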

VNS3_Base Container Flow

User or interior traffic arrives at the VNS3 Controller. Firewall rules can filter and send a subset of traffic to the VNS3_Base container for analysis.

Forwarding network traffic to the VNS3_Base Container

If you are using the base container to analyze traffic flowing through your VNS3 controller, you will need to forward a copy of that traffic to your container.

Traffic is forwarded to the container using firewall rules. An example is given below:

VNS3 Firewall

Enter rules to send a copy of either incoming traffic (arriving on eth0 or tun0) or outgoing traffic (leaving eth0 or tun0).

#EXAMPLE: Copy all incoming tun0 (Overlay Network) traffic to the VNS3_Base container.

MACRO_CUST -j COPY --from tun0 --to <Container Network IP> --inbound

#EXAMPLE: Copy all outgoing eth0 (Underlay Network) traffic to the TCP Tools Container

MACRO_CUST -j COPY --from eth0 --to <Container Network IP> --outbound
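The copy rules above follow one pattern: a source interface, a container address, and a direction. A minimal sketch of that pattern follows. The function `copy_rule` is hypothetical, and the sets of accepted interfaces and directions are limited to the values that appear in this document.

```python
import ipaddress

# Only the interfaces and directions mentioned in this document.
VALID_INTERFACES = {"eth0", "tun0"}
VALID_DIRECTIONS = {"inbound", "outbound"}


def copy_rule(interface, container_ip, direction):
    """Format a traffic-copy firewall rule like the examples above."""
    if interface not in VALID_INTERFACES:
        raise ValueError(f"unexpected interface: {interface}")
    if direction not in VALID_DIRECTIONS:
        raise ValueError(f"unexpected direction: {direction}")
    ip = ipaddress.ip_address(container_ip)  # raises ValueError on a bad address
    return f"MACRO_CUST -j COPY --from {interface} --to {ip} --{direction}"


if __name__ == "__main__":
    # 198.51.100.2 is a placeholder for the container's network IP.
    print(copy_rule("tun0", "198.51.100.2", "inbound"))
    print(copy_rule("eth0", "198.51.100.2", "outbound"))
```

Rejecting unknown interfaces and directions up front catches typos before a rule reaches the VNS3 Firewall page.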

Updated on 11 Feb 2020